Cloud

29 November, 2017

/ 1 Comment

AWS Sydney Partner Day and Summit Roundup

A few weeks ago, I was lucky enough to head up to Sydney to attend the AWS Partner Day and AWS Summit. In this post I'll summarise how it all went and share some of my impressions.

02 May, 2017

/ 2 Comments

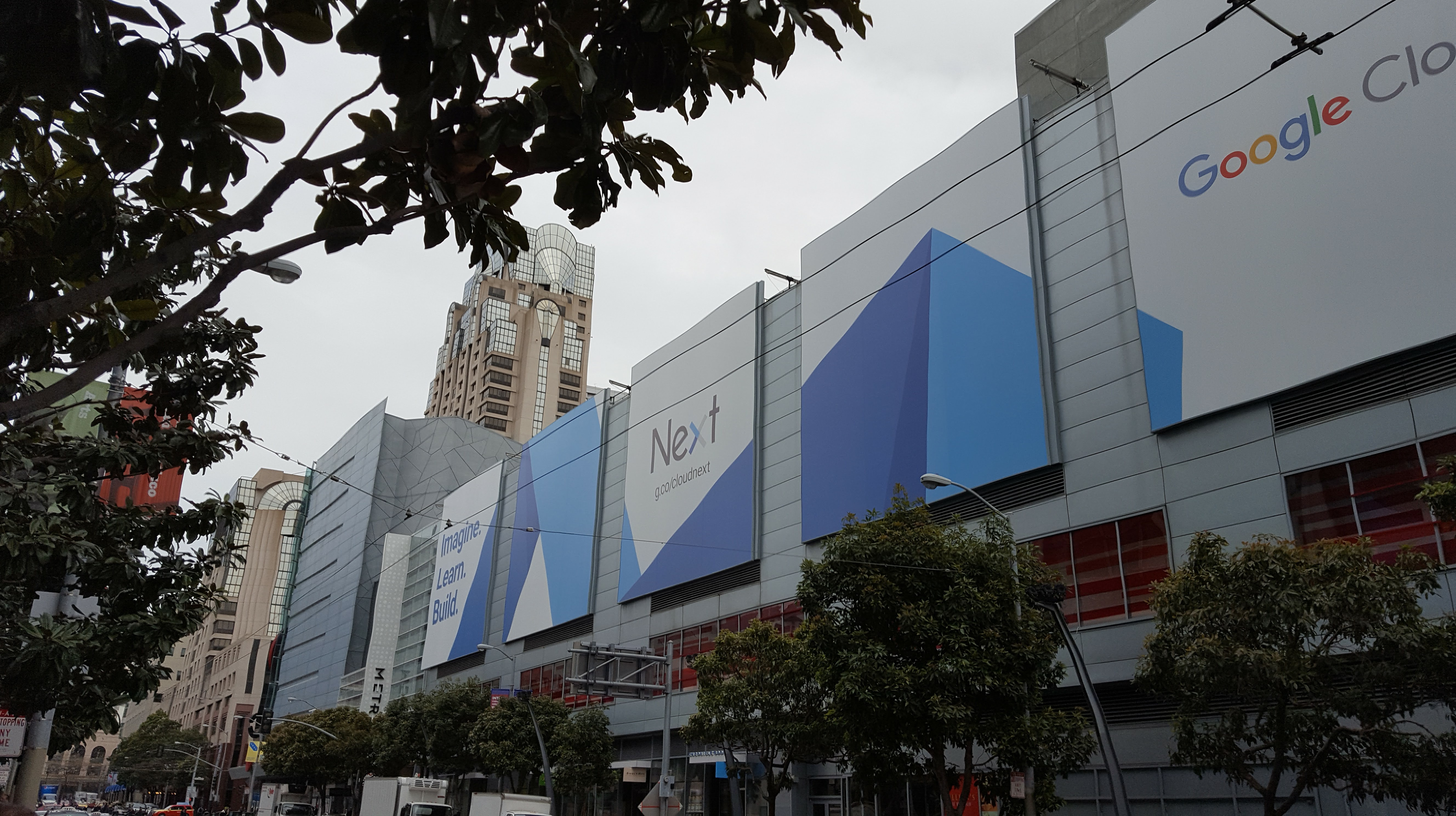

Cloud Next 2017 – Shifting to the Cloud

Last week I had the privilege of attending Google Cloud Next in San Francisco. With Google finally due to open a datacenter in Australia this year, it was certain to be a great opportunity to learn about what's next with Google Cloud. From the moment I arrived at the baggage carousel at San Francisco International Airport, I was swamped with advertising for the conference. It was clear that Google is really pushing their cloud platform to as many developers as possible. This left me really excited for what was about to come over the following week. In this post I'm going to try and sum up how it all went.

14 March, 2017

/ 1 Comment

Shiners submit entries to the AWS Serverless Chatbot Hackathon

Shine's Cliff Subagio, Stephen Shim and Michael Diender submitted a couple of entries to the recent AWS Serverless Chatbot Hackathon that we think are pretty cool.

11 October, 2016

/ 1 Comment

Shiner to present at very first YOW! Data conference

Shine's very own Pablo Caif will be rocking the stage at the very first YOW! Data conference in Sydney. The conference will be running over two days (22-23 Sep) and is focused on big data, analytics, and machine learning. Pablo will give his presentation on Google BigQuery,...

31 August, 2016

/ 0 Comments

A Deep Dive into DynamoDB Partitions

Databases are the backbone of most modern web applications and their performance plays a major role in user experience. Faster response times - even by a fraction of a second - can be the major deciding factor for most users to choose one option over another. Therefore, it is important to take response rate into consideration whilst designing your databases in order to provide the best possible performance. In this article, I’m going to discuss how to optimise DynamoDB database performance by using partitions.

27 June, 2016

/ 8 Comments

Orchestrating Tasks Using AWS SWF

Lately I've been doing a lot of work managing batch-processing tasks. Broadly speaking, there are 2 types of trigger for such a task: time-based and logic-based. The former can be easily done by cron/scheduled jobs. The latter can be a bit tricky, mostly because it can involve dependencies on other tasks. In this post, I will talk about how I've been using the AWS Simple Workflow service (SWF) to take some of the headache out of orchestrating tasks.

20 May, 2016

/ 2 Comments

NoSQL in the cloud: A scalable alternative to Relational Databases

With the current move to cloud computing, the need to scale applications presents itself as a challenge for storing data. If you are using a traditional relational database you may find yourself working on a complex policy for distributing your database load across multiple database instances. This solution will often present a lot of problems and probably won’t be great at elastically scaling. As an alternative you could consider a cloud-based NoSQL database. Over the past few weeks I have been analysing a few such offerings, each of which promises to scale as your application grows, without requiring you to think about how you might distribute the data and load.

19 February, 2016

/ 0 Comments

Messages in the sky

One of the projects that I'm currently working on is developing a solution whereby millions of rows per hour are streamed in real time into Google BigQuery. This data is then available for immediate analysis by the business. The business likes this. It's an extremely interesting, yet challenging project. And we are always looking for ways of improving our streaming infrastructure. As I explained in a previous blog post, the data/rows that we stream to BigQuery are ad-impressions, which are generated by an ad-server (Google DFP). This was a great accomplishment in its own right, especially after optimising our architecture and adding Redis into the mix. Using Redis added robustness and stability to our infrastructure. But – there is always a but – we still need to denormalise the data before analysing it. In this blog post I'll talk about how you can use Google Cloud Pub/Sub to denormalise your data in real time before performing analysis on it.

19 October, 2015

/ 0 Comments