26 Mar 2015

Scurvy, A/B Testing, and Barack Obama

It’s been almost three months since I started implementing A/B tests for one of our clients, and I have to say I am enjoying it a lot.

A/B testing is a very powerful technique. Not only can it increase your web site’s conversion rates, it also promotes innovation and encourages data-driven solutions.

In this article I will give an introduction to A/B testing by asking an important question: what have scurvy, A/B testing and Barack Obama all got in common?

Scurvy

Let’s start by travelling back in time a few centuries.

It’s May 1747 and James Lind, a Scottish surgeon serving in the British Royal Navy, is trying to figure out what causes scurvy. If you haven’t heard of it, it’s a nasty affliction which causes crew members to lose their teeth and sometimes even die.

We know now that scurvy results from a deficiency of vitamin C, but back in the 18th century they didn’t even know what a vitamin was.

However, there were a number of theories as to what would remedy scurvy, one of which involved eating citrus fruits.

To answer the question once and for all, Lind decided to perform an experiment which is now believed to have been the first proper clinical trial:

“Lind allocated two men to each of six different daily treatments for a period of fourteen days. The six treatments were: 1.1 litres of cider; twenty-five millilitres of elixir vitriol (dilute sulphuric acid); 18 millilitres of vinegar three times throughout the day before meals; half a pint of sea water; two oranges and one lemon continued for six days only (when the supply was exhausted); and a medicinal paste made up of garlic, mustard seed, dried radish root and gum myrrh.

The most sudden and visible good effects were perceived from the use of oranges and lemons;”[ref]

His experiment showed that eating lemons and oranges cured scurvy. Lind published the results, and within a few decades scurvy had been largely eradicated from the Royal Navy.

Lind’s experiment epitomised what a clinical trial is all about: testing different approaches under equal conditions, without bias, then measuring the results and proving (or disproving) your hypothesis.

A/B Tests

An A/B test is a clinical trial performed on a web site, followed by a statistical analysis of the results. It’s a trial in which we determine whether, by making certain changes to our site (usually design or copy changes), we can affect our users’ behaviour. Examples of behaviours we might be interested in include people viewing particular pages, clicking a desired button, joining an email distribution list or buying products online.
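As a minimal sketch of that statistical-analysis step, assuming you have already counted visitors and conversions for each variant, a pooled two-proportion z-test is one common way to check whether a difference in conversion rates is likely to be more than chance. The function name and the visitor counts are hypothetical illustrations, not results from any real test.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare the conversion rates of variants A and B.

    Returns (relative lift of B over A, z statistic, two-sided p-value).
    Uses the pooled two-proportion z-test, which relies on a normal
    approximation and so assumes reasonably large samples.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value: P(|Z| >= |z|) for a standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    lift = (rate_b - rate_a) / rate_a
    return lift, z, p_value

# Hypothetical counts, not real data: 5,000 visitors per variant.
lift, z, p_value = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=250, n_b=5000)
print(f"lift={lift:.1%} z={z:.2f} p={p_value:.3f}")
```

With these made-up numbers, B converts at 5% against A’s 4% (a 25% relative lift) and the p-value comes out at roughly 0.016, below the conventional 0.05 threshold. Testing tools may use different statistics, so treat this as an illustration of the idea rather than of any particular tool’s method.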

A/B tests are also known as split tests or randomised experiments. The main advantage of A/B tests is that, by adopting a more data-driven development focus, we measure the efficacy of proposed changes with real customers.
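The “randomised” part matters: each visitor should land in a variant by chance, not by any trait we choose, and should keep seeing the same variant on later visits. A/B testing tools normally handle this for you; the sketch below assumes a hash-based bucketing approach, and the function name, experiment name and user IDs are hypothetical.

```python
import hashlib
from collections import Counter

def assign_variant(user_id, experiment, variants=("A", "B")):
    """Deterministically map a user to one variant of an experiment.

    Hashing "experiment:user_id" spreads users approximately evenly
    across variants, returns the same variant for the same user every
    time, and lets different experiments split the same users independently.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % len(variants)
    return variants[bucket]

# Hypothetical usage: the same visitor always gets the same variant...
print(assign_variant("user-42", "create-account-button"))
# ...and across many visitors the split is close to 50/50.
counts = Counter(assign_variant(f"user-{i}", "create-account-button") for i in range(10_000))
print(counts)
```

Hashing rather than storing a random choice per visitor means no lookup table is needed, at the cost of tying assignment to a stable user ID.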

This gives a chance to ideas that our inherent biases or ill-formed opinions might otherwise have ruled out. Furthermore, with every A/B test we learn from the data we collect. It teaches us about our users’ behaviour and preferences, forming a feedback loop that keeps improving our web site.

The arrival of a range of tools on the market has made A/B testing cheaper to do than ever, and far cheaper than user-testing sessions or site-wide redesigns.

Let’s illustrate the concept with a few quick case studies from ContentVerve.com.

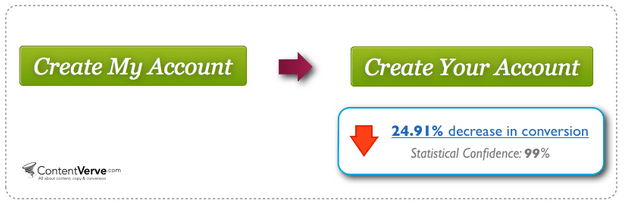

The first shows that labelling a button with first-person wording (‘Create My Account’, as opposed to ‘Create Your Account’) can help customers identify more personally with the proposed action, and thus make them more inclined to click:

The second example demonstrates how a button that offers multiple actions makes a customer more inclined to convert:

Finally, consider this interesting case study from Netflix:

Check the results here.

Barack Obama

Yes, finally: Obama! Everybody who does A/B testing knows this story. It involves Dan Siroker, one of the founders of Optimizely (an A/B testing software company), and US president Barack Obama. Siroker was approached by the Obama presidential campaign team for the 2008 election to help them use analytics data to make better decisions on the campaign.

He started with a simple experiment on the campaign’s splash page using Google Website Optimizer: a multivariate test that combined different variations of buttons and media. The goal was to see which combination maximised the number of people signing up as email subscribers, which in turn would lead to donations.
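A multivariate test of this kind is usually a full-factorial design: every button variation is paired with every media variation, and each pairing becomes its own page version. A minimal sketch, assuming placeholder labels (the campaign’s actual variations are not listed in this text):

```python
from itertools import product

# Hypothetical placeholder labels, not the campaign's real variations.
buttons = ["button-1", "button-2", "button-3"]
media = ["image-1", "image-2", "video-1"]

def build_combinations(*factors):
    """Return every combination of factor levels (a full-factorial design)."""
    return list(product(*factors))

combinations = build_combinations(buttons, media)
print(len(combinations))  # 3 buttons x 3 media = 9 page versions
for button, medium in combinations:
    print(button, medium)
```

Because traffic is split across every combination, each extra variation multiplies the number of versions, so a multivariate test generally needs considerably more visitors than a simple A/B test to reach statistical significance.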

Here’s a quick overview of the different variations:

Which combination of button and media do you think was the winner? When the experiment launched, the favourite among the guys running the Obama campaign was one of the videos. But here are the results.

“Ok, I want A/B tests, but where do I start?”

The A/B testing market is booming, with all sorts of tools at all sorts of prices. It doesn’t matter whether your needs are big or small, or you’ve got no money or plenty of budget, or you are an engineer or a marketing guy… you’re in luck! Here are some of the more popular options:

Worth mentioning: Google Content Experiments is free. And if you are wondering which is the most popular offering, trends.builtwith.com tells us that Optimizely is the hottest at the moment:

Tips and Traps

Ok, we are almost done with this quick introduction to A/B testing. I hope you found it interesting, because I truly enjoy working with it. I’d love to finish with some tips for getting started with A/B testing:

- Do your homework. Know the basic statistical terms. Here’s an excellent article to help you really understand concepts such as statistical confidence, statistical power, the null hypothesis, A/A tests and sample size.

- Carefully plan and design your tests. If possible, involve other people and draw on their ideas and suggestions. Rather than launching random tests, design a clear roadmap with the help of UX designers, marketing people and technical people.

- Understand your results. Don’t stop a test before the agreed time, and only accept results that are statistically significant. Even if a test produces a clear winner, run the experiment again later to check that you get consistent results.
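Agreeing on a test duration up front usually means estimating the sample size you need. A minimal sketch, assuming a two-sided test on two conversion rates and the standard normal-approximation formula; the function name and the 4% and 5% rates are hypothetical:

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline, expected, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH variant to detect a change in
    conversion rate from `baseline` to `expected`.

    alpha: significance level for a two-sided test (0.05 = 95% confidence)
    power: chance of detecting the change if it really exists (0.8 = 80%)
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for power = 0.8
    variance = baseline * (1 - baseline) + expected * (1 - expected)
    effect = expected - baseline
    return math.ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)

# Hypothetical goal: detect a lift from a 4% to a 5% conversion rate.
needed = sample_size_per_variant(0.04, 0.05)
print(needed)
```

For those rates, at 95% confidence and 80% power, this comes to roughly 6,700 visitors per variant. Because the requirement grows with the inverse square of the difference, halving the detectable difference roughly quadruples the number of visitors needed.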

And finally: don’t be your own worst enemy. If you don’t understand or don’t like how the results of a certain experiment look, don’t get upset! There is a reason for everything. Run more tests to gain a better understanding of your customers.

Arthur

Posted at 08:43h, 27 March
Great article Fernando!

Damien

Posted at 11:37h, 30 March
Thanks for your feedback Fernando. If you are around Sydney next week, I’ll be happy to talk about CRO for the CRO day http://www.eventbrite.com.au/e/international-cro-day-meetup-sydney-tickets-16326525076